Donald Trump behind bars? Russian leader Vladimir Putin kneeling before his Chinese counterpart Xi Jinping? You might have seen what seem to be realistic images like these circulating online, the result of rapid advances in artificial intelligence known as Generative AI.

Some ultrarealistic images of news events have already been mistaken for real ones and shared on social media platforms.

How can you tell a genuine image from a computer-generated one? Visual inconsistencies and a picture’s context can help — but there is no foolproof method of identifying an AI-generated image, specialists told AFP.

Recently developed AI tools such as Midjourney, DALL-E, Craiyon or Stable Diffusion can generate an infinite number of images by drawing on massive databases.

Many people use these tools for humorous or artistic purposes, but others rely on them to fabricate images of political news.

For example, a flood of AI-generated images circulated on Twitter after the meeting between Putin and Xi on March 20, 2023. Others portrayed French President Emmanuel Macron as a garbage collector as rubbish was mounting on Parisian streets amid mass strikes over controversial pension reforms.

Although most creators clearly state these widely shared images are fabricated, other photos circulate with no context or are presented as authentic.

Developers have launched tools such as Hugging Face to try to detect these montages. But the results are mixed and can sometimes be misleading, according to tests by AFP.

Hi, I’m a tech reporter that covers China, cybersecurity, & new media. Hundreds of people are sharing this photo of Xi Jinping & Vladimir Putin as they meet at the Kremlin. The two did meet, but it’s highly likely this photo was generated by an AI program. Here’s why: pic.twitter.com/6xqsDLxiMa

— Amanda Florian 小爱 (@Amanda_Florian)

March 21, 2023

AI (#ia) #photo generation has made insane progress in very little time. From now on the #fake will be the norm on social networks (#reseaux): doubt what you see by default, there is no other choice. #midjourney #midjourneyv5 pic.twitter.com/dJoZXGrbRy

— Cryptonoo₿ From 100K to 0 (@Cryptonoobzzzz)

March 19, 2023

“When AI is generating pictures (from scratch), there is in general not a single original image from where parts are taken,” David Fischinger, an AI specialist and engineer at the Austrian Institute of Technology, told AFP on March 21. “There are thousands/millions of photos that were used to learn billions of parameters.”

Vincent Terrasi, co-founder of Draft & Goal, a startup that launched an AI detector for universities, added: “The AI mixes these images from its database, deconstructs them and then reconstructs a photo pixel by pixel, which means that in the final rendering, we no longer notice the difference between the original images.”

That is why manipulation detection software works poorly, if at all, in identifying AI-generated images. A picture’s metadata, which can sometimes reveal the source of an AI-generated image, is not helpful either.

“Unfortunately you cannot rely on metadata since on social networks they are completely removed,” AI expert Annalisa Verdoliva, professor at the Frederick II University of Naples, told AFP.
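As a rough illustration of what a metadata check involves, the Python sketch below lists the text fields embedded in a PNG file using only the standard library. It assumes the file still carries its original metadata, which is rarely the case for a copy downloaded from a social network. The helper names, the `parameters` key and the sample prompt are hypothetical and serve only to build demo data.

```python
import struct
import zlib

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def read_png_text_chunks(data: bytes) -> dict:
    """Return the keyword/value pairs stored in a PNG's tEXt chunks."""
    if not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    found = {}
    pos = len(PNG_SIGNATURE)
    while pos + 8 <= len(data):
        # Each chunk starts with a 4-byte length and a 4-byte type.
        length, ctype = struct.unpack(">I4s", data[pos:pos + 8])
        body = data[pos + 8:pos + 8 + length]
        if ctype == b"tEXt":
            keyword, _, value = body.partition(b"\x00")
            found[keyword.decode("latin-1")] = value.decode("latin-1")
        if ctype == b"IEND":
            break
        pos += 12 + length  # length + type + data + 4-byte CRC
    return found


def make_chunk(ctype: bytes, body: bytes) -> bytes:
    """Assemble one PNG chunk with its CRC."""
    crc = zlib.crc32(ctype + body) & 0xFFFFFFFF
    return struct.pack(">I", len(body)) + ctype + body + struct.pack(">I", crc)


def build_sample_png(text: dict) -> bytes:
    """Build a 1x1 grayscale PNG carrying the given text fields (demo data only)."""
    ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)
    chunks = [make_chunk(b"IHDR", ihdr)]
    for key, value in text.items():
        payload = key.encode("latin-1") + b"\x00" + value.encode("latin-1")
        chunks.append(make_chunk(b"tEXt", payload))
    chunks.append(make_chunk(b"IDAT", zlib.compress(b"\x00\x00")))
    chunks.append(make_chunk(b"IEND", b""))
    return PNG_SIGNATURE + b"".join(chunks)


# Hypothetical example: a file that kept a generator-written text field.
sample = build_sample_png({"parameters": "hypothetical prompt"})
print(read_png_text_chunks(sample))
```

An empty result does not show that a picture is genuine. It may only mean the metadata was stripped when the file was uploaded.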

Go back to the image source

Experts say one important clue is finding the first time the picture was posted online. In some cases, the creator may have said it was AI-generated and indicated the tool used.

A reverse image search can help by showing whether the picture has been indexed by search engines and by surfacing older posts that contain the same photo.

This method makes it possible to find the source of images that allegedly show a violent altercation between former US president Donald Trump and police officers arresting him.

A Google reverse image search for one of these pictures leads to a tweet from Eliot Higgins, founder of the investigative collective Bellingcat, published on March 20, 2023.

Higgins explained in a thread that he created the series of images with the latest version of Midjourney.

Making pictures of Trump getting arrested while waiting for Trump’s arrest. pic.twitter.com/4D2QQfUpLZ

— Eliot Higgins (@EliotHiggins)

March 20, 2023

If you can’t find the original photo, a reverse image search can lead to a better-quality version of the picture if it has been cropped or modified while being shared. A sharper photo will be easier to analyse for errors that might reveal a montage.

A reverse image search will also find similar pictures, which can be valuable to compare potential AI-generated photos with those from reliable sources.
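Search engines do not publish how they match pictures, so the following is only a sketch of one well-known idea, the average hash, and not a description of any real reverse image search. It assumes each picture has already been shrunk to a small grayscale grid. The 4x4 brightness values below are made up for illustration.

```python
def average_hash(pixels):
    """Map a small grayscale grid to bits: 1 where a pixel is brighter than the mean."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    return tuple(1 if value > mean else 0 for value in flat)


def hamming_distance(hash_a, hash_b):
    """Count the positions where two hashes differ (0 means identical)."""
    return sum(a != b for a, b in zip(hash_a, hash_b))


# Made-up 4x4 grids: an image, a slightly brightened copy, and an unrelated image.
original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [11, 21, 201, 211],
]
brightened_copy = [[value + 6 for value in row] for row in original]
unrelated = [
    [200, 10, 200, 10],
    [10, 200, 10, 200],
    [200, 10, 200, 10],
    [10, 200, 10, 200],
]

print(hamming_distance(average_hash(original), average_hash(brightened_copy)))
print(hamming_distance(average_hash(original), average_hash(unrelated)))
```

A small distance suggests two files are versions of the same picture, which is the kind of match that lets a cropped or recompressed copy lead back to an earlier post.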

For one viral picture allegedly showing Putin kneeling before Xi, Twitter users such as Italian journalist David Puente pointed out that the decor in the room was different from that in pictures published by media covering the event.

Photo captions and online comments can also be useful in recognising a certain style of AI-generated content. DALL-E, for example, is known for its ultrarealistic designs and Midjourney for its scenes showing celebrities.

Some tools, like Midjourney, publish generated images in conversation channels, which can leave a trace of where a picture came from.

Visual clues

Even without knowing a photo’s source, you may be able to analyse the image itself using visual clues.

Look for a watermark

Sometimes clues are hidden in the photo, such as a watermark used by some AI creation tools.

DALL-E, for example, automatically generates a multi-coloured bar on the bottom right of all its images. Craiyon places a small red pencil in the same place.

But not all AI-generated images have watermarks — and these can be removed, cropped or hidden.

Tips from the art world

Tina Nikoukhah, a doctoral student studying image processing at ENS Paris-Saclay University, told AFP: “If in doubt, look at the grain of the image, which will be very different for an AI-generated photo from that of a real photo.”

Using free versions of AI tools, AFP generated images that had a style similar to paintings of the hyperrealist movement, such as the left-hand example below showing an image of Brad Pitt in Paris.

Another creation below on the right was made with similar keywords on DALL-E. The image is not as obviously AI-generated.

Visual inconsistencies

Despite the meteoric progress in Generative AI, errors still show up in AI-generated content. These defects are the best way to recognise a fabricated image, specialists told AFP.

“Some characteristics, often the same ones, pose a problem for AI. It is these inconsistencies and artefacts that must be scrutinised, as in a game of spot the difference,” said Terrasi of Draft & Goal.

However, Verdoliva of Frederick II University of Naples warned: “Generation methods keep improving over time and show fewer and fewer synthesis artefacts, so I would not rely on visual clues in the long term.”

As of March 2023, for example, realistic hands remain difficult to generate. AFP’s AI-generated photo of Pitt shows the actor with a disproportionately large finger.

An AFP journalist pointed out in February 2023 that a police officer had six fingers in a series of pictures allegedly taken during a demonstration against the French pension system reform on February 7, 2023.

The hand strikes again: these photos allegedly shot at a French protest rally yesterday look almost real – if it weren’t for the officer’s six-fingered glove #disinformation #AI pic.twitter.com/qzi6DxMdOx

— Nina Lamparski (@ninaism)

February 8, 2023

“Currently, AI images are also having a very difficult time generating reflections,” Terrasi said. “A good way to spot an AI is to look for shadows, mirrors, water, but also to zoom in on the eyes, and analyse the pupils since there is normally a reflection when you take a photo. We can also often notice that the eyes are not the same size, sometimes with different colours.”

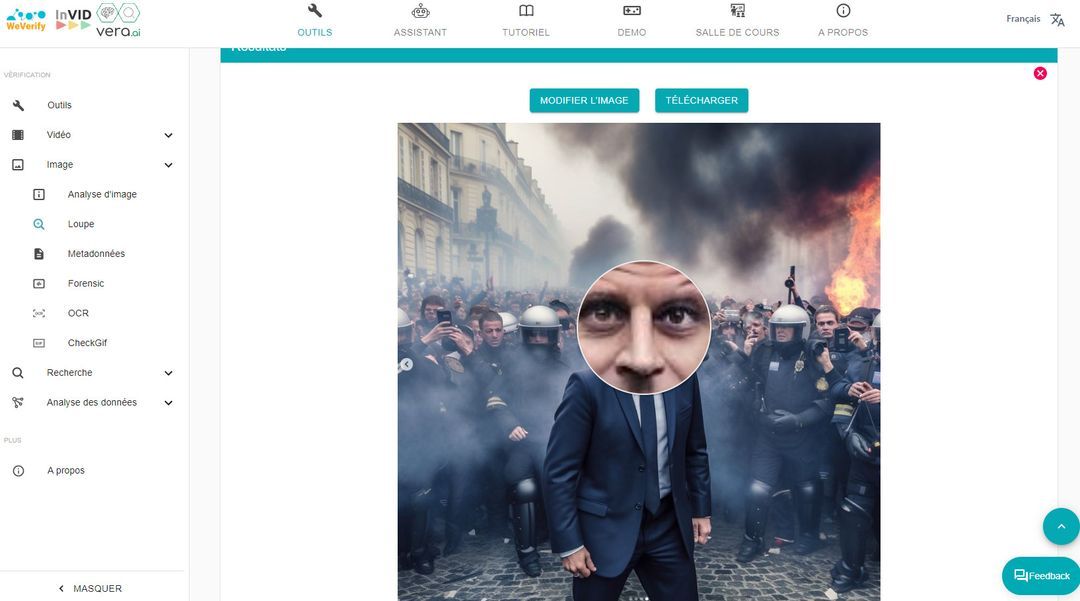

Using the magnifying glass in the Invid-WeVerify tool highlights a colour difference between the two eyes in this AI-generated photo of Macron shared on Instagram. Not only did the picture feature two different shades of brown but Macron actually has blue eyes.
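The eye comparison described above can also be put into numbers. The Python sketch below is a minimal illustration, not the method used by the Invid-WeVerify tool: it assumes the pixels of each iris have already been selected by hand, and the RGB values are invented for the example.

```python
def mean_colour(patch):
    """Average the red, green and blue values of a list of (r, g, b) pixels."""
    count = len(patch)
    return tuple(sum(pixel[i] for pixel in patch) / count for i in range(3))


def colour_distance(colour_a, colour_b):
    """Euclidean distance between two RGB colours (0 means identical)."""
    return sum((a - b) ** 2 for a, b in zip(colour_a, colour_b)) ** 0.5


# Invented sample pixels for the two irises of the same face.
left_iris = [(92, 60, 40), (88, 58, 38), (90, 62, 42)]
right_iris = [(140, 100, 70), (138, 98, 72), (142, 102, 68)]

gap = colour_distance(mean_colour(left_iris), mean_colour(right_iris))
print(f"colour gap between the eyes: {gap:.1f}")
```

A large gap is only a hint. Lighting, reflections and image compression can also shift colours in a real photo, so the figure needs to be read alongside the other clues.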

Generators also often create asymmetries. Faces can be disproportionate, or ears can differ in size.

Teeth and hair are difficult to imitate and can reveal, in their outlines or texture, that an image is not real.

And some elements can be poorly integrated, such as sunglasses that blend into a face.

Experts also say mixing together several images may create lighting problems in an AI-generated image.

Check the background

A good way to spot these anomalies is to peer into the photo background. While it may at first glance seem normal, an AI-generated photo often reveals errors, such as in these photos allegedly showing Barack Obama and Angela Merkel at the beach.

One of the people in the background appears to have his legs cut off.

“The farther away an element is, the more an object will be blurred, distorted, and have incorrect perspectives,” Terrasi said.

In the fake photo of the meeting between Xi and Putin, a line on a column is not straight. The Russian leader’s head also seems disproportionate compared to the rest of his body. Fischinger told AFP that the inconsistencies revealed an AI-generated image.

Use common sense

Some elements may not be distorted but they can still betray an error of logic. “It’s good to rely on common sense” when you doubt an image, he said.

The photo below, generated by AFP on DALL-E and intended to show Paris, features a blue no-entry sign, which does not exist in France.

This clue, combined with the chopped fingertips of the central character, a plastic-looking croissant and a difference in lighting on some windows, suggests the photo is AI-generated.

The watermark at the bottom right of the image removes any doubt and signals that the shot is from DALL-E.

Finally, if an image claims to show an event but its credibility is in doubt, turn to reliable sources to look for possible inconsistencies.